Continuing in the vein of paren-free, I’d like to present a refreshed vision of JavaScript Harmony. This impressionist exercise is of course not canonical (not yet), but it’s not some random, creepy fanfic either. Something like this could actually happen, likelier and better if done with your help (more on how at the end).

I’m blurring the boundaries between Ecma TC39’s current consensus-Harmony, straw proposals for Harmony that some on TC39 favor, and my ideas. On purpose, because I think JS needs some new conceptual integrity. It does not need play-it-safe design-by-committee, either of the “let’s union all proposals” kind (which won’t fly on TC39), or a blind “let’s intersect proposals and if the empty set remains, so be it” approach (which also won’t fly, but it’s the likelier bad outcome).

Anyway, it’s my blog, and my current dream. I hope you like it. Talk and dream back at me, and with any luck we’ll build a better Harmony-in-reality.

little languages

Calling JavaScript a little language is polite but false at this late date: ES5.1 weighs in at over 100,000 words, with hundreds of nonterminals in the lexical and syntactic grammars.

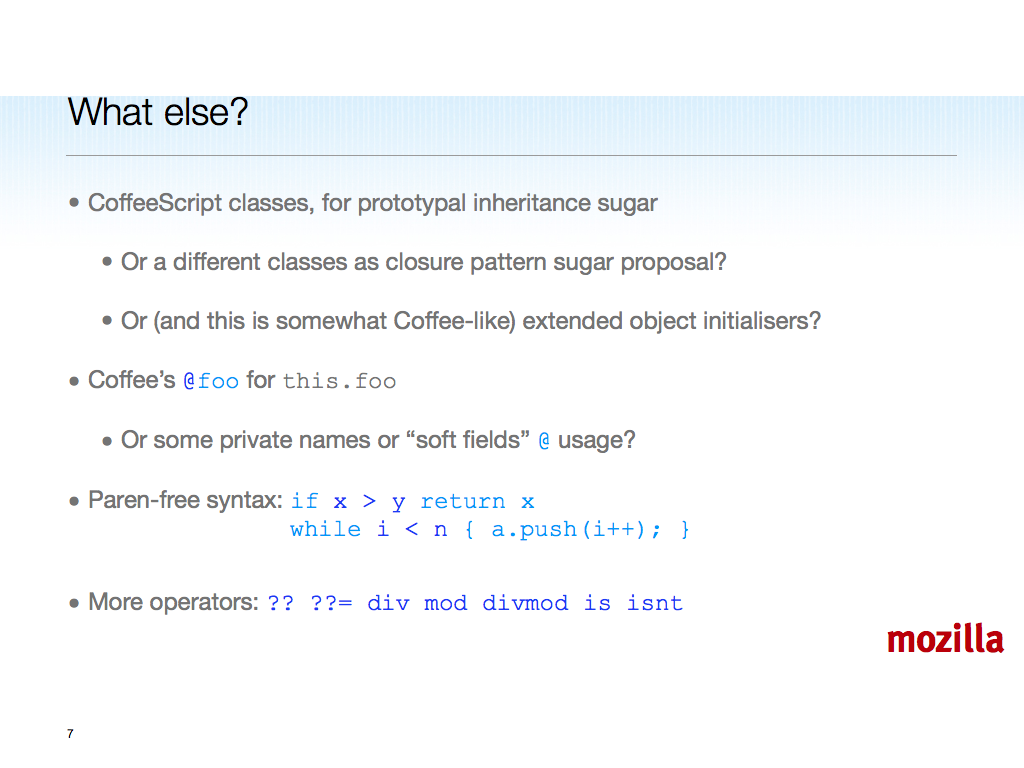

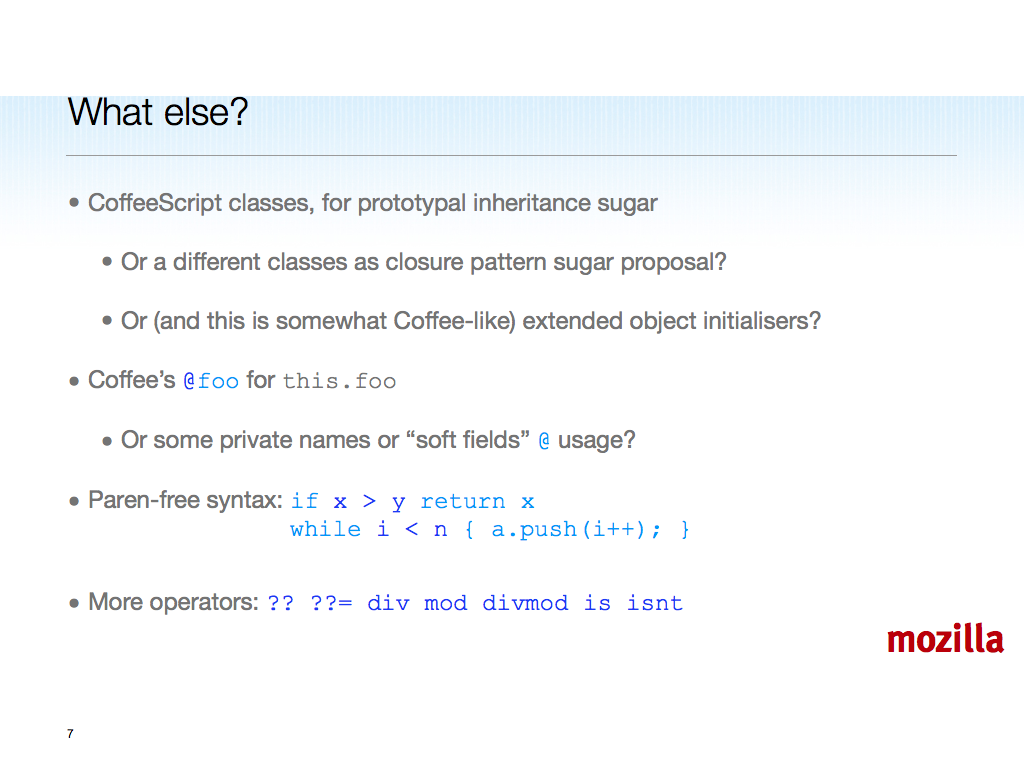

I would say that the same goes for CoffeeScript, although I get the point in its use of the phrase “little language”: concise expression-language sugar for the lever-arm, using JS-in-full as implemented in all browsers as the fulcrum, for maximum productivity leverage. CoffeeScript is well done and more convenient to use than JS, provided you buy into the Python-esque significant space and the costs of generating JS from another source language. But semantically it’s still JS.

Could JS evolve to be a better “little language” in both surface and substance? Implementors and users will impose some fuzzy but obvious and (past the fuzz) hard limits. JS can’t evolve directly into something too different. For instance, I believe JS implementors on TC39 would reject significant space instead of curly braces, or a mandatory bottom-up parser with disambiguation magic.

Meanwhile, polyfills such as CoffeeScript (when not run as a server-side code generator) may become more widely used, pushing JS in different directions. Still, it will be hard for polyfills to beat native code implementation, <script> tag prefetching, and the other built-into-every-browser advantages of pure JS.

Whatever happens with polyfills, JS’s fitful progress over its life so far suggests that it can evolve further, and significantly. Such evolution requires growth in the short run, to solve the obvious web-imposed problem of backward compatibility.

growing a language

Maturing languages grow even without web-wide compatibility constraints, and JS is no exception. We should continue to grow the language, keeping support for old forms while adding new forms to help users themselves grow the language.

That last link is to a video of Guy Steele’s famous talk. Here’s a cleaned-up transcript. One quote:

If we add hundreds of new things to the Java programming language, we will have a huge language, but it will take a long time to get there. But if we add just a few things -- generic types, overloaded operators, and user defined types of light weight, for use as numbers and small vectors and such -- that are designed to let users make and add things for their own use, I think we can go a long way, and much faster. We need to put tools for language growth in the hands of the users.

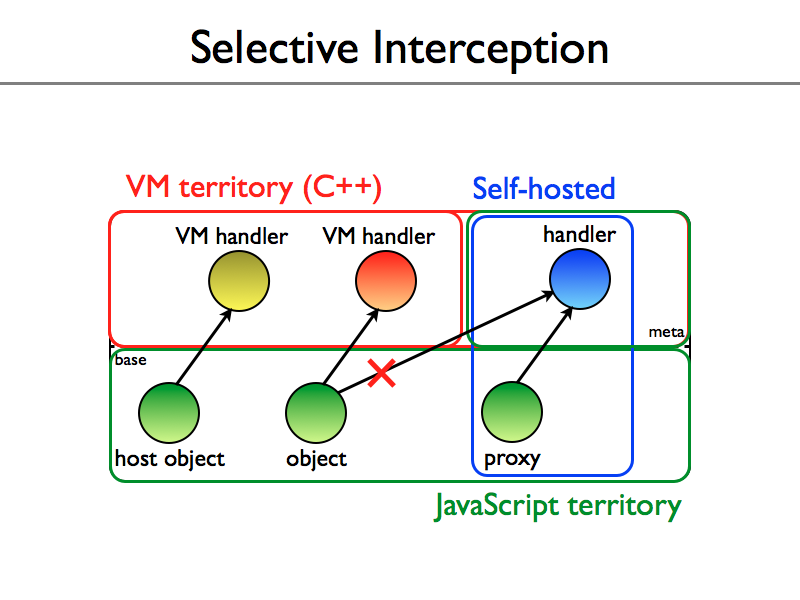

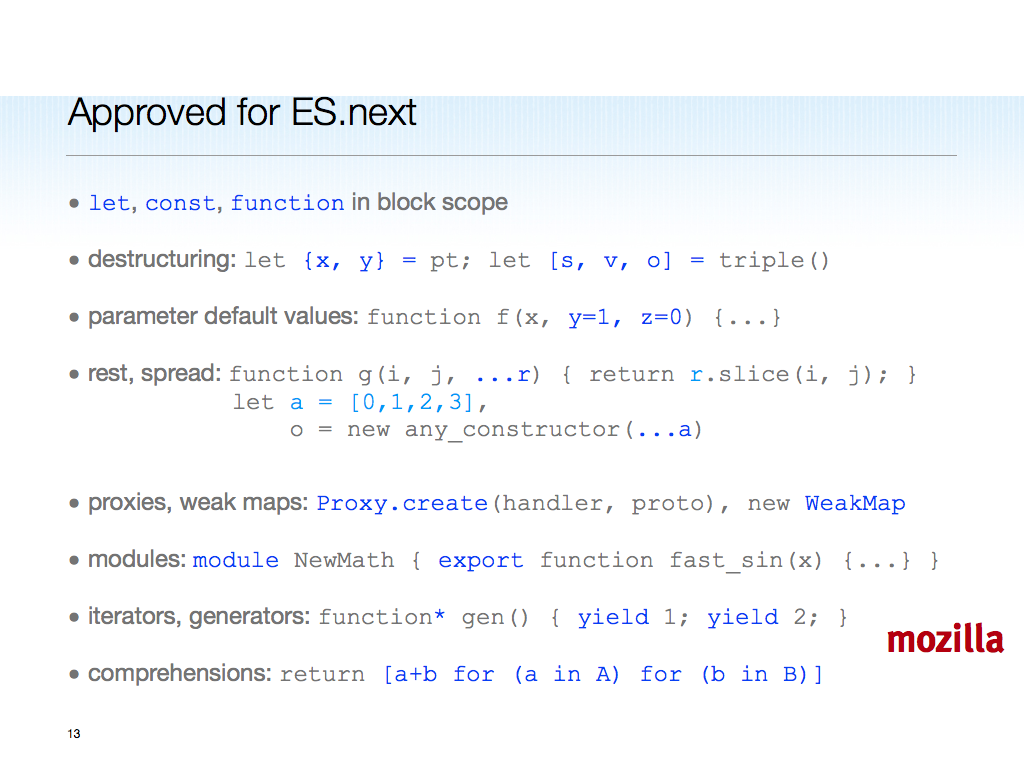

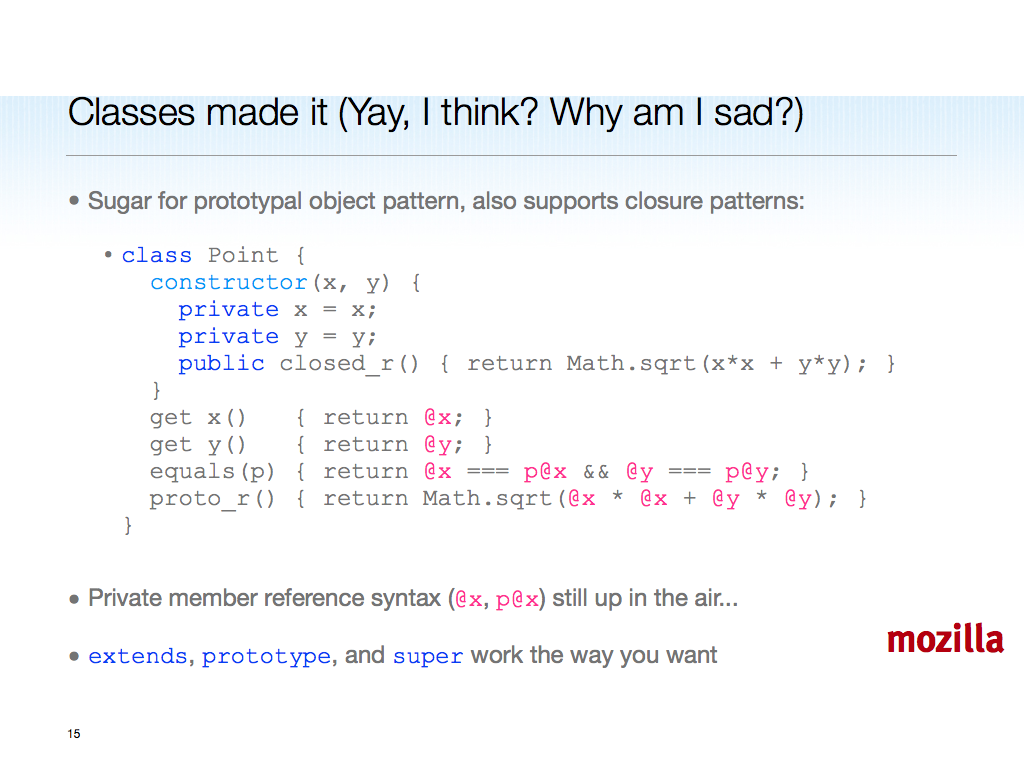

Look past the Java specifics. This applies deeply to JS as well. Empowering users to grow the language is why modules, proxies, binary data, and even an operators/literals/value-types dark horse, are high priorities for Harmony in my view. Which is not to say we shouldn’t add anything else. Because:

I hope that we can, in this way or some other way, design a programming language where we don’t seem to spend most of our time talking and writing in words of just one syllable.

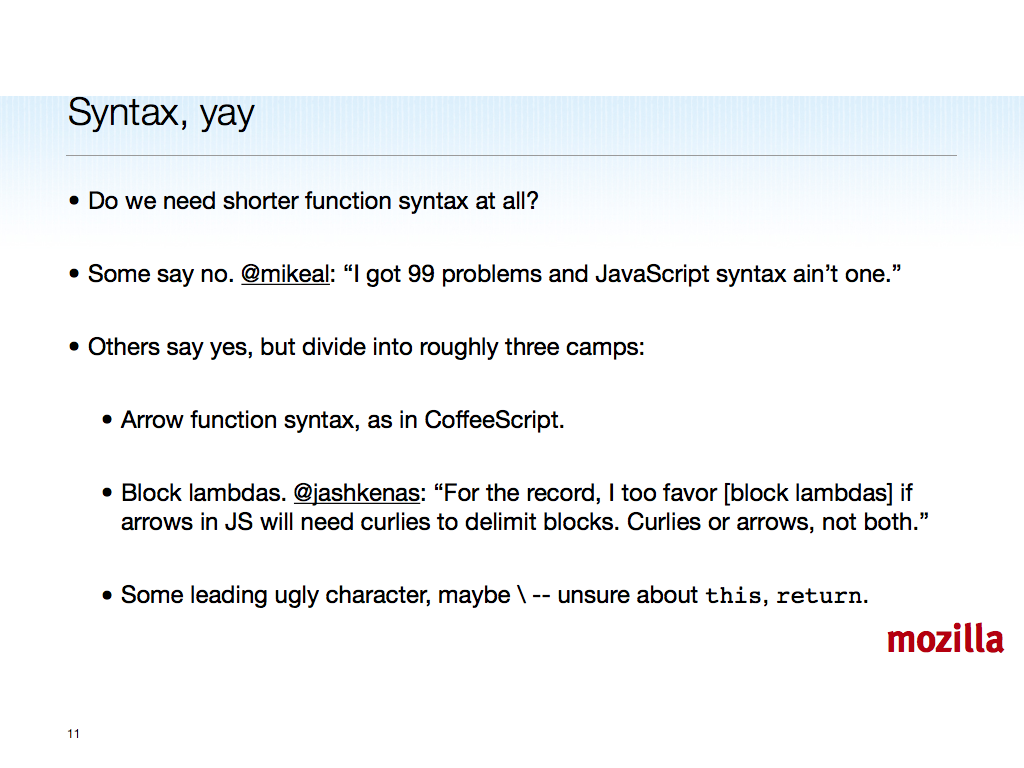

You can do anything with function in JS, but you shouldn’t have to — it over-taxes JS programmers and VM implementors to learn and optimize all the idiomatic patterns. Too much like writing with only one-syllable words.

grow to shrink

If we do this right, Harmony’s kernel semantics do not grow inordinately in complexity. Then users merely have to choose to use the simpler new syntax over the old, and for those users (and possibly for everyone, many years hence — sooner, if you use a translator to “lower” Harmony to JS-as-it-is), JS is in fact more usable and smaller in its critical dimensions.

Beyond users choosing to code in a subset, we could potentially shrink — or not grow, or grow less — the opt-in Harmony language by excluding misfeatures of “classic JS”. This was one of the ideas developed in paren-free and some followup comments: no messy, underspecified, not-quite-interoperable for (i in o) loop, only for i in o loops, comprehensions, and generator expressions, to take one example. ES5 strict mode already removes with. Harmony already proposes to remove the global object as top-most scope, to pick a non-syntactic example.

(Opt-in is required for Harmony because of new syntax. Yet developer brain-print conservation, existing code migration, shared-object-heap interoperation, and browser engine code re-use, all favor keeping Harmony “close” to JS-as-it-is. How close is the question. I’m in favor of pushing this envelope given the inertia of the standards setting and the conservatism of committees. Bear with me if you disagree.)

On the web, the only way to shrink is to grow first. As I put it at jsconf.us last year, provide better carrots to lead the horses away from the rotting vegetables, which can be cleaned up later.

finding harmony

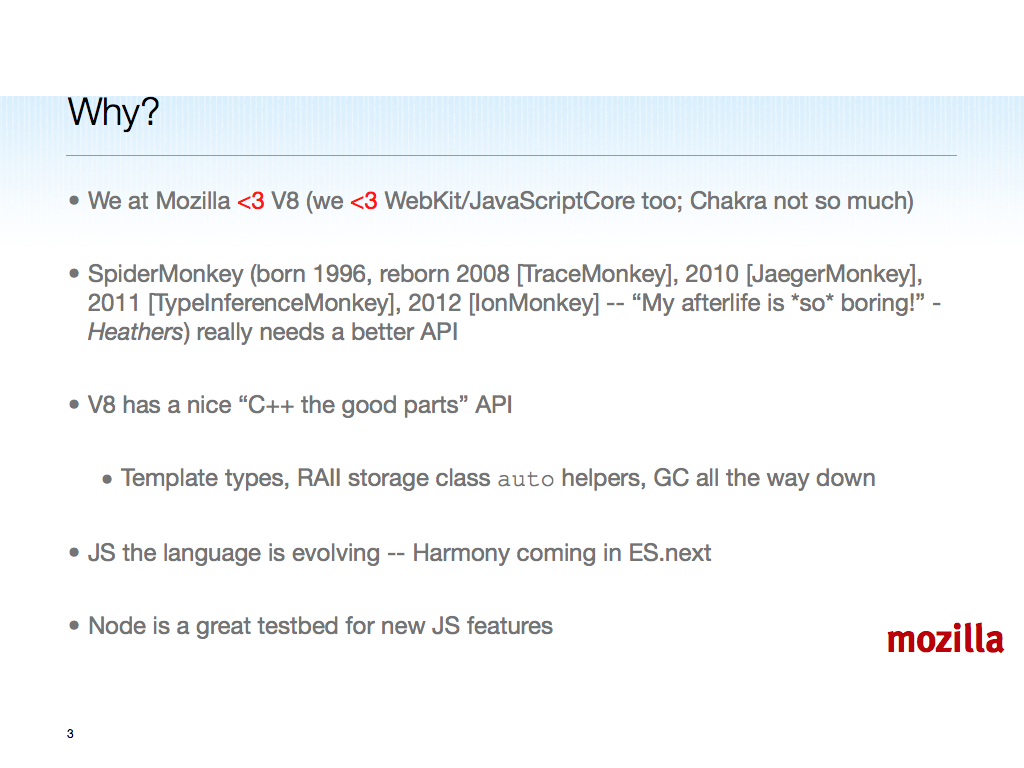

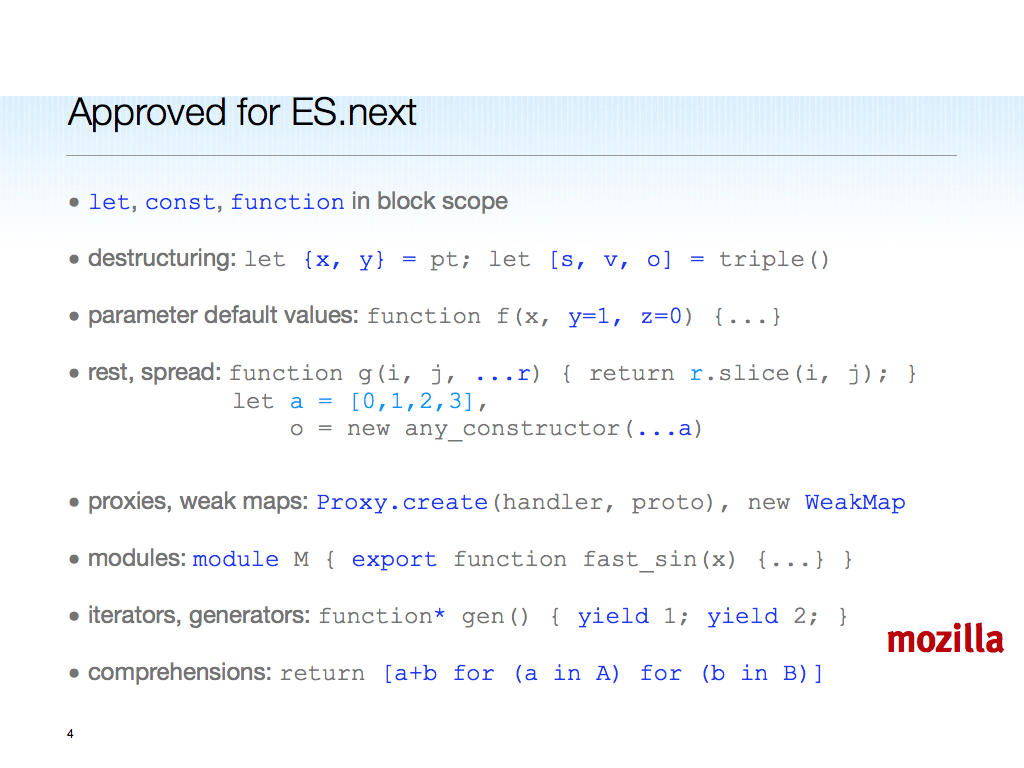

Some of what’s below is already harmonious according to TC39. Some is new, some is not yet proposed. The idea is to give an overview of Harmony that covers all of the high points and adds some new spice, instead of referring true believers to the sprawling wiki and then hoping they can figure things out from recent changes and the discussion list.

Unlike the CoffeeScript docs, I’ll show JS as it is implemented today first, followed by the Harmony-of-my-dreams code. This emphasizes how we’re working to fill gaps in the language’s semantics, not simply add sugar that lowers from new syntax to old. There’s nothing wrong with desugaring, and I believe CoffeeScript and other front ends for JS have a bright future, but TC39 is charged with evolving the core language, especially in ways that can’t be done efficiently or at all in today’s JS.

With this context in mind, let’s dive in.

binding and scope

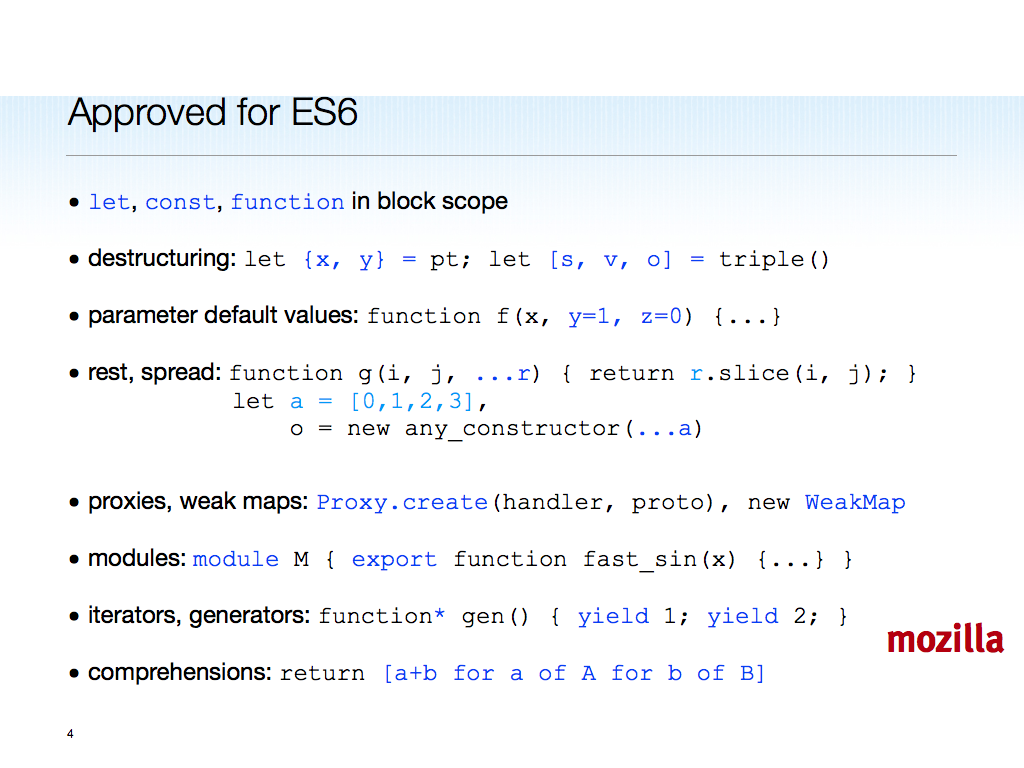

Block scoped let and const, not weird old hoisted (to top of function or script) var. Lexical scope all the way up, no global object on the scope chain. Free variables are early errors.

var still_hoisted = "alas"

var PRETEND_CONST = 3.14

function later(f, t, type) {

setTimeout(f, t, typo) // oops...

}

let block_scoped = "yay!"

const REALLY = "srsly"

function later(f, t, type) {

setTimeout(f, t, typo) // EARLY ERROR

}

Removing the global window object from the scope chain doesn’t mean it won’t be available, though; see modules below.
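To make the hoisting difference concrete, here is a minimal sketch, assuming an engine that already implements block-scoped let. The function names hoisted and blockScoped are mine, made up for illustration.

```javascript
// var is hoisted to the top of the enclosing function,
// so it is still visible after the block ends.
function hoisted() {
  if (true) { var v = "alas" }
  return typeof v
}

// let is scoped to the block, so nothing named b exists out here.
function blockScoped() {
  if (true) { let b = "yay!" }
  return typeof b
}

console.log(hoisted())     // string
console.log(blockScoped()) // undefined
```

The typeof operator is used on purpose: it reports "undefined" for a name that is not in scope instead of throwing, which keeps the sketch runnable in today's engines, where a free variable is a runtime error rather than an early one.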

functions

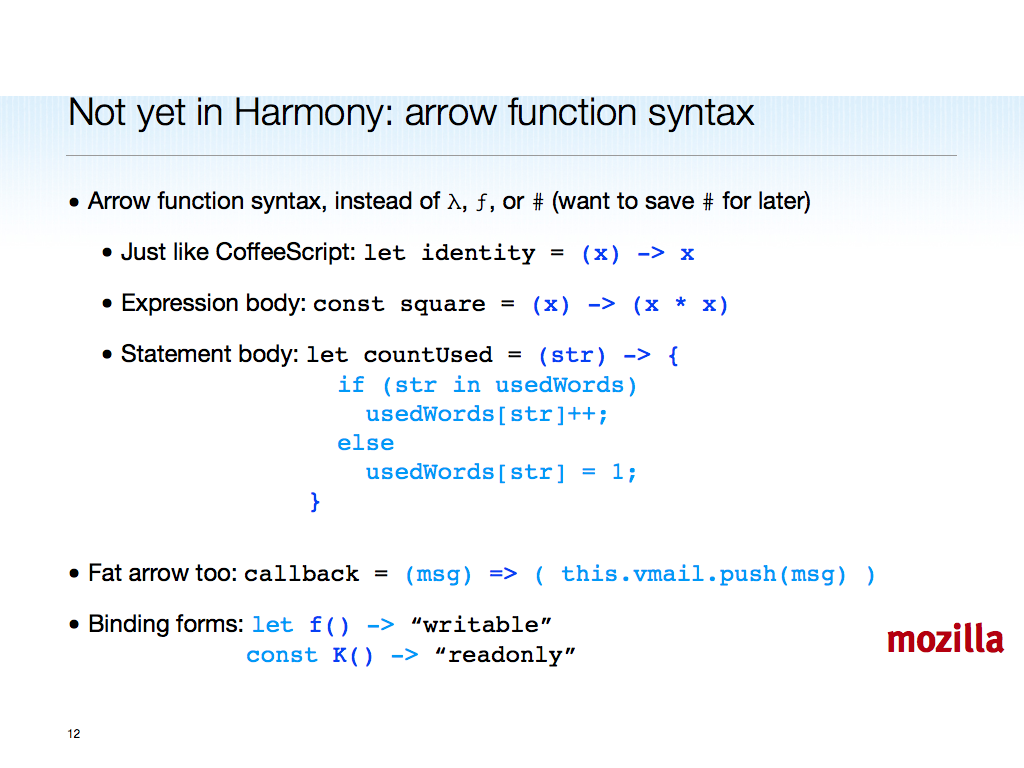

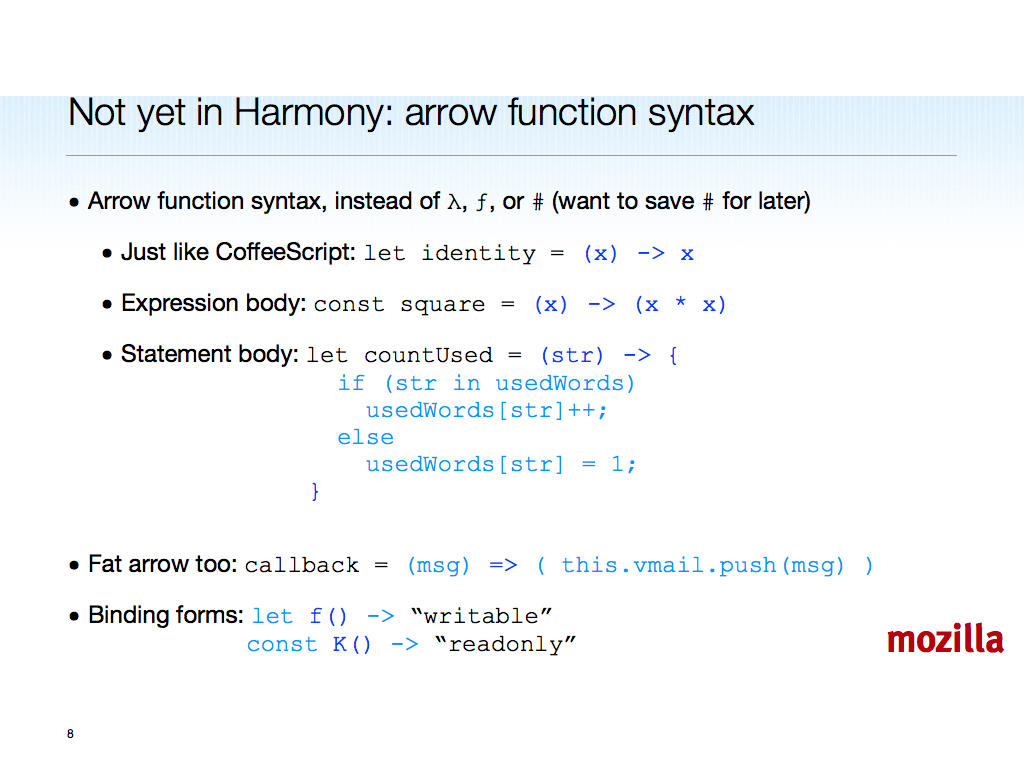

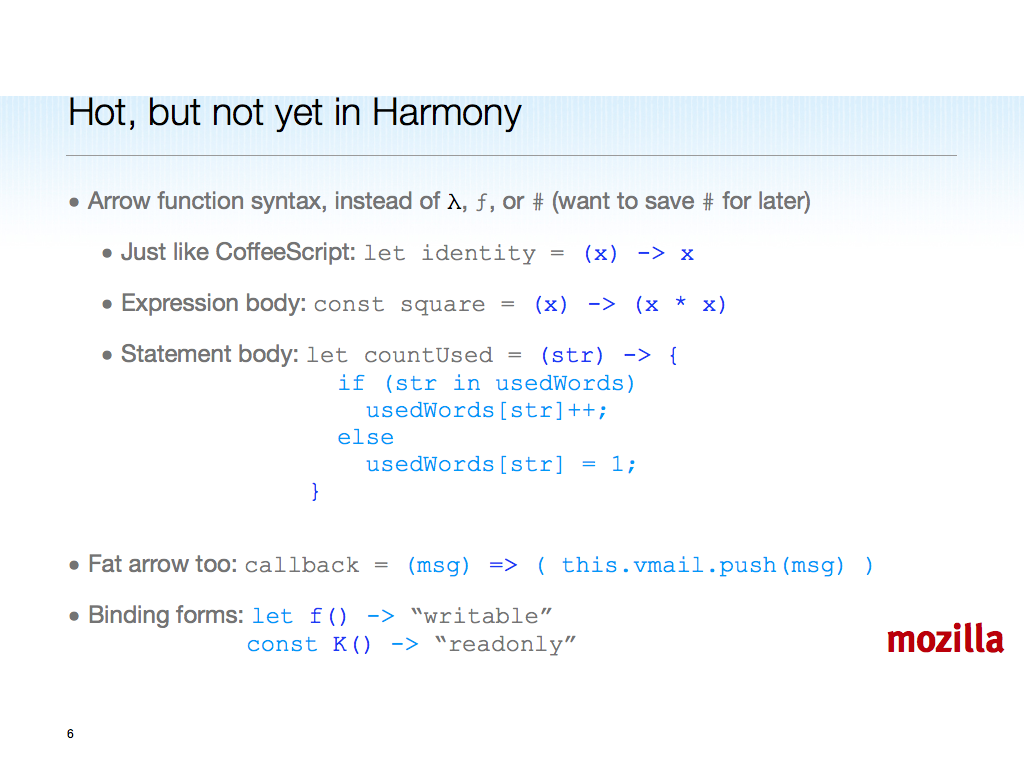

[Presented in the spirit of the Mozilla Apologator:] I’m sorry for picking so long a keyword. Beyond the length of function, and for all the many wins of closures, the objects that result from evaluating function declarations and expressions have some very shaggy hair. Time for a trim, starting with syntax proposed by @Arv and @Alex, extended to work with binding keywords:

function add(a, b) { return a + b }

(function(x) { return x * x })

const #add(a, b) { a + b }

#(x) { x * x }

The # character is one of few ASCII punctuators available. My straw polls on better characters to use have not led to a clear winner, and this one is proposed for Harmony. CoffeeScript’s -> and => seem to require bottom-up parsing, so they’re not going to fly among implementors.

Beyond syntax, notice how the braced body can end in an expression statement that evaluates to the implicit return value. And here’s another difference from functions: #-functions are immutable and joined.

What to call these # functions? Ruby has given up hash rockets. Can JS coin a new hash-phrase: hash-funcs? Suggestions welcome.

We should not call these things lambdas, as that drags in untenable Tennent’s Correspondence Principle strangeness, such as return from a lambda returning from its enclosing function (if still active; otherwise you would get a runtime error).

tail position

The implicit return value does more than save six characters plus one space (relieving you of having to type return ), it also makes the last expression statement be in tail position. This contrasts with a JS function, which has an implicit return undefined; at the end of its body.

function cps(x) { not_tail(x) }

function cps_harder(x) {

work(x)

return tail(x)

}

const #cps_smarter(x) {

work(x)

tail(x)

}

At his JSConf.eu talk last fall, @Crock promoted the idea of proper tail calls, something we have wrestled with in TC39 since ES4 days. Tail calls are a feature of Scheme, providing an asymptotic space guarantee (in plain English, you can tail-call without growing the call stack inevitably to entrain the space for all args and vars active along the dynamic call chain).

I agree with Doug that tail calls would be a win, especially with evented code. The # function syntax allows us to give tail calls a boost and save you seven (14 total: function + return_ – #) keystrokes.

Some have objected that this creates an unintended completion value leak-hazard, but (a) we can improve the Harmony definition of completion value in #-functions, (b) the void operator I added for javascript: URLs back in ’95 stands ready, and (c) when in doubt, use function syntax or write an explicit return.

no arguments

The arguments object is another clump of hair to trim from functions in adding hash-funcs. With Harmony, we have rest parameters, so we don’t need no steenking arguments!

(function () {

return typeof arguments

})() == "object"

#() {

typeof arguments

}() == "undefined"

This haircut makes life simpler for web developers; it means even more to JS implementors.

lexical this

Another Tennent’s Correspondence Principle casualty: this default binding. When you call o.m() in JS, unless m is a bound method, this must bind to o. But for all functions in ES5 strict mode, and therefore in Harmony (based on ES5 strict), this is bound to undefined when the function is called by its lexical name (f, not o.m for o.m=f).

Binding this to undefined censors the global object, a capability leak. Apart from that fix, though, passing undefined as this is nearly useless.

Why not bind this to the same value as in the code enclosing the hash-func? Doing so will greatly reduce the need for var self=this or Function.prototype.bind, especially with the Array extras.

function writeNodes() {

var self = this

this.nodes.forEach(function(node) {

self.write(node);

})

}

function writeNodes() {

this.nodes.forEach(#(node) {

this.write(node);

})

}

It’s great that ES5 added bind as a standard method, but why should you have to call it all over the place? If Harmony does not address this issue, I will count that a failure.
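For comparison, here is a sketch of the three workarounds ES5 programmers reach for today. The Writer constructor and its method names are hypothetical, invented only to exercise the patterns.

```javascript
// Hypothetical writer object, used only for illustration.
function Writer(nodes) {
  this.nodes = nodes
  this.out = []
}
Writer.prototype.write = function (node) {
  this.out.push(node)
}

// Workaround 1: capture this in a closure variable.
Writer.prototype.writeNodesSelf = function () {
  var self = this
  this.nodes.forEach(function (node) {
    self.write(node)
  })
}

// Workaround 2: ES5 Function.prototype.bind.
Writer.prototype.writeNodesBind = function () {
  this.nodes.forEach(function (node) {
    this.write(node)
  }.bind(this))
}

// Workaround 3: the optional thisArg accepted by the Array extras.
Writer.prototype.writeNodesThisArg = function () {
  this.nodes.forEach(function (node) {
    this.write(node)
  }, this)
}
```

All three produce the same result, and all three are noise the programmer has to remember to write at every callback site.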

records

A hot-button issue with ES5: Object.freeze. Whose side are you on, Batman’s or Mr. Freeze’s? Simplistic to say the least, since even in one’s own small-world codebase, making some things immutable protects against mistakes. Never mind single- and multi-threaded data sharing and other upside.

However, calling an Object method around every object initialiser you want frozen is a drag, and even then, the JS engine has to stand on its head and spin around to figure out that all the many evaluations of such an expression could be shared (but for the violation of object identity, detectable via === and !==, that sharing would create; but that could be optimized too, at some further expense).

With # we can do better:

var point = {x: 10, y: 20}

point.equals({x: 10, y: 20})

const point = #{x: 10, y: 20}

point === #{x: 10, y: 20}

Not only does the # sigil allow us to create records that are hash-consed so there is only one object identity per nearest containing relevant closure; we also get == and != (and the triple-equals forms) for free. Object content-based equality.
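Here is a rough library-level approximation of that idea in today's JS, to show what the engine would be doing for you. The record helper and its internTable are hypothetical names of mine. The sketch assumes property values are JSON-representable primitives, and it interns into one global table that is never cleaned up, rather than per closure as described above.

```javascript
// Hypothetical helper: intern frozen objects by content, so that
// equal contents share a single object identity.
var internTable = {}

function record(fields) {
  var keys = Object.keys(fields).sort()
  // The key encodes the sorted (name, value) pairs.
  var key = JSON.stringify(keys.map(function (k) { return [k, fields[k]] }))
  if (!Object.prototype.hasOwnProperty.call(internTable, key)) {
    var rec = {}
    keys.forEach(function (k) { rec[k] = fields[k] })
    internTable[key] = Object.freeze(rec)
  }
  return internTable[key]
}

var point = record({x: 10, y: 20})
console.log(point === record({x: 10, y: 20})) // true
console.log(point === record({x: 10, y: 21})) // false
console.log(Object.isFrozen(point))           // true
```

Every call still allocates a throwaway object literal and a key string, which is exactly the kind of overhead a built-in form could avoid.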

tuples

You may have noticed a trend here. Gaps in the JS language in usability, semantic unity of purpose, and reliability or invariance, can be made up for by adding “hash forms” that are shorter or still short enough, better for optimization, and free from mutation hazards and other historic hair. The same goes for Arrays, via tuples:

var tuple = [1, 2, 3]

tuple[tuple.length-1] === 3

Array.prototype.compare = /*...*/

tuple.slice(0, 2).compare([1, 2]) == 0

tuple.compare([1, 2, 4]) < 0

const tuple = #[1, 2, 3]

tuple[-1] === 3

tuple[0:2] === #[1, 2]

tuple < #[1, 2, 4]

Not only are the equality operators (strict and loose) based on contents and not object identity (which is not material due to the implicit freezing of these array-like objects), tuples support relational operators (< <= > >=), again based on contents not identity.

Relationals could work on records too, using enumeration order (assuming we standardize that order sanely).

Negative indexing, something unlikely to be grafted onto Array in Harmony (see “shared-object-heap interoperation” point above), is more than a minor convenience in my book. It’s also something Harmony Proxy handlers can implement. So tuples should support negative indexing even if arrays do not. And it ought to be cheap to create a tuple from an Array instance.
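As a sketch of how a proxy handler could provide negative indexing, here is one way to write it. It uses the new Proxy(target, handler) form that shipping engines provide, which is spelled differently from the Proxy.create API sketched later in this post. The tuple and compareTuples helpers are hypothetical, and the comparison function stands in for the relational operators, which a library cannot overload.

```javascript
// Hypothetical tuple() helper: a frozen copy of the array behind a proxy
// that maps negative integer indices to offsets from the end.
function tuple(elements) {
  var frozen = Object.freeze(elements.slice())
  return new Proxy(frozen, {
    get: function (target, name, receiver) {
      if (typeof name === "string" && /^-[1-9]\d*$/.test(name)) {
        return target[target.length + Number(name)]
      }
      return Reflect.get(target, name, receiver)
    }
  })
}

// Lexicographic comparison by contents: negative, zero, or positive.
function compareTuples(a, b) {
  var n = Math.min(a.length, b.length)
  for (var i = 0; i < n; i++) {
    if (a[i] < b[i]) return -1
    if (a[i] > b[i]) return 1
  }
  return a.length - b.length
}

var t = tuple([1, 2, 3])
console.log(t[-1])                                  // 3
console.log(compareTuples(t, tuple([1, 2, 4])) < 0) // true
console.log(compareTuples(t, tuple([1, 2, 3])))     // 0
```

Note that two such tuples with equal contents are still distinct objects under ===, so this covers only the indexing and ordering half of the proposal.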

If negative indexing works, can slices and ranges be far behind? I’ll leave those for another post.

Array.prototype is full of generic methods, most of which do not mutate this. It’s tempting to want tuples to delegate to Array.prototype, with optimizations possible (as fast engines do today for dense-enough arrays). I’ll throw this idea out and confess I haven’t thought through every corner of it.

One known bug in the Array.prototype methods that construct a new array object, e.g. slice: they always make an Array instance, instead of calling new this.constructor. I agree with @Alex that we ought to fix this bug in Harmony.
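The behavior is easy to observe with an ES5-style array-like "subclass". MyList below is a hypothetical constructor whose instances inherit from Array.prototype without being true arrays.

```javascript
// Hypothetical array-like "subclass" in the ES5 style.
function MyList() {}
MyList.prototype = Object.create(Array.prototype)
MyList.prototype.constructor = MyList

var list = new MyList()
list.push(1, 2, 3)             // push is generic, so this works
var sliced = list.slice(0, 2)  // but slice builds a plain Array

console.log(sliced instanceof MyList) // false
console.log(Array.isArray(sliced))    // true
```

The elements survive the slice, but the result has lost its MyList-ness, along with any methods defined on MyList.prototype.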

statements

Here I recap paren-free, which I have prototyped in Narcissus (invoked via njs --paren-free), but with an obvious and convenient extension:

if (x > y) alert("brace-free")

if (x > z) return "paren-full"

if (x > y) f() else if (x > z) g()

if x > y { alert("paren-free") }

if x > z return "brace-free"

if x > y { f() } else if x > z { g() }

We do not want else clauses to be braced no matter what. In particular, an if statement as the else clause should not be braced, to avoid rightward indentation drift. Therefore any statement that starts with a keyword need not be braced. This is a boon for short break, continue, return, and throw statements often controlled by if heads that guard uncommon conditions.

modules

The simple modules proposal, along with its module loaders adjunct, is the likely Harmony module system solution. Note that there is no example written in current JS below; you’d need a preprocessor, which is not part of the language.

module M {

module N = "https://N.com/N.js"

export const K = N.K

export #add(x, y) { x + y }

}

Modules are being prototyped in Narcissus by @little_calculist right now. More on this at the end.

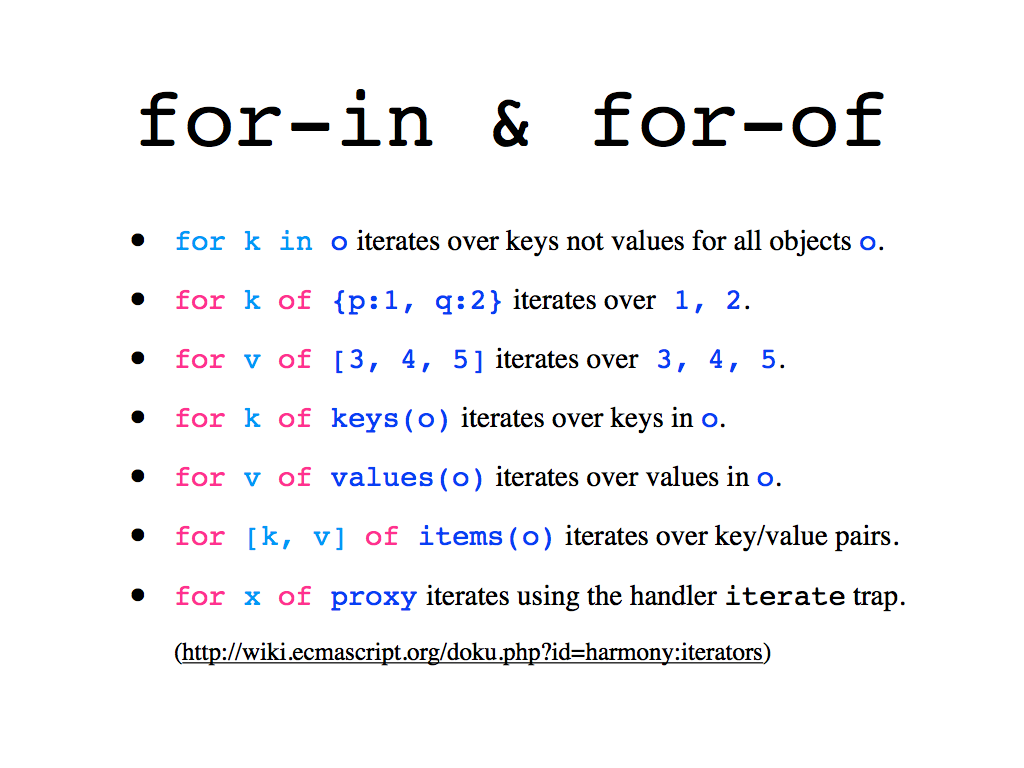

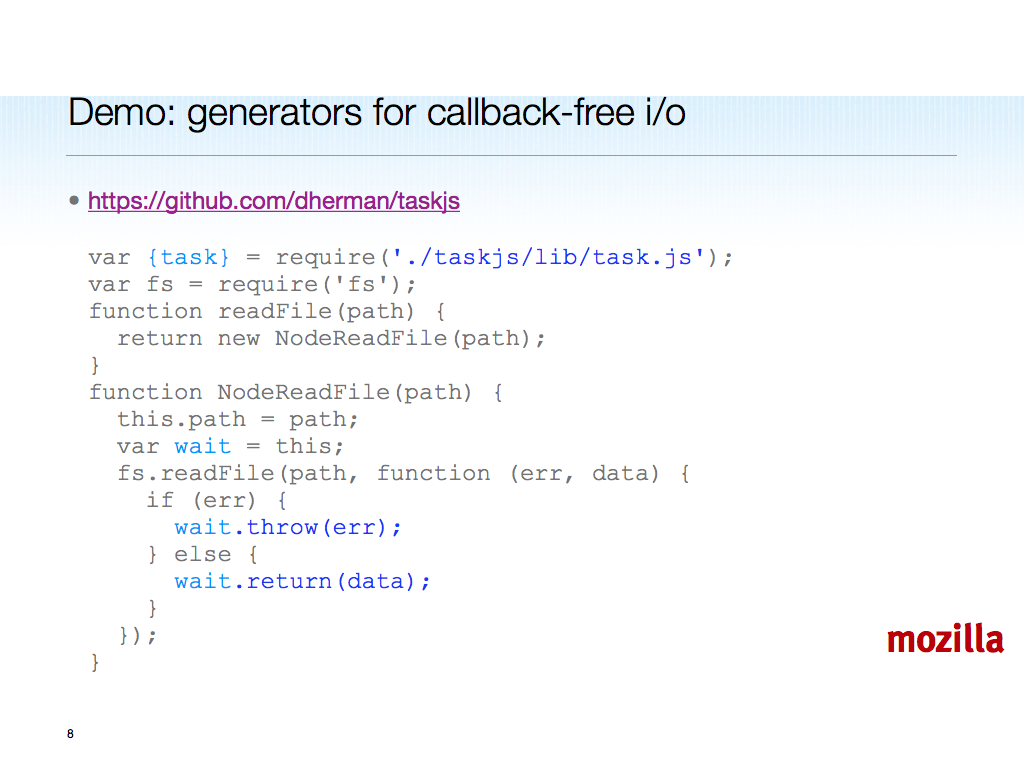

iteration

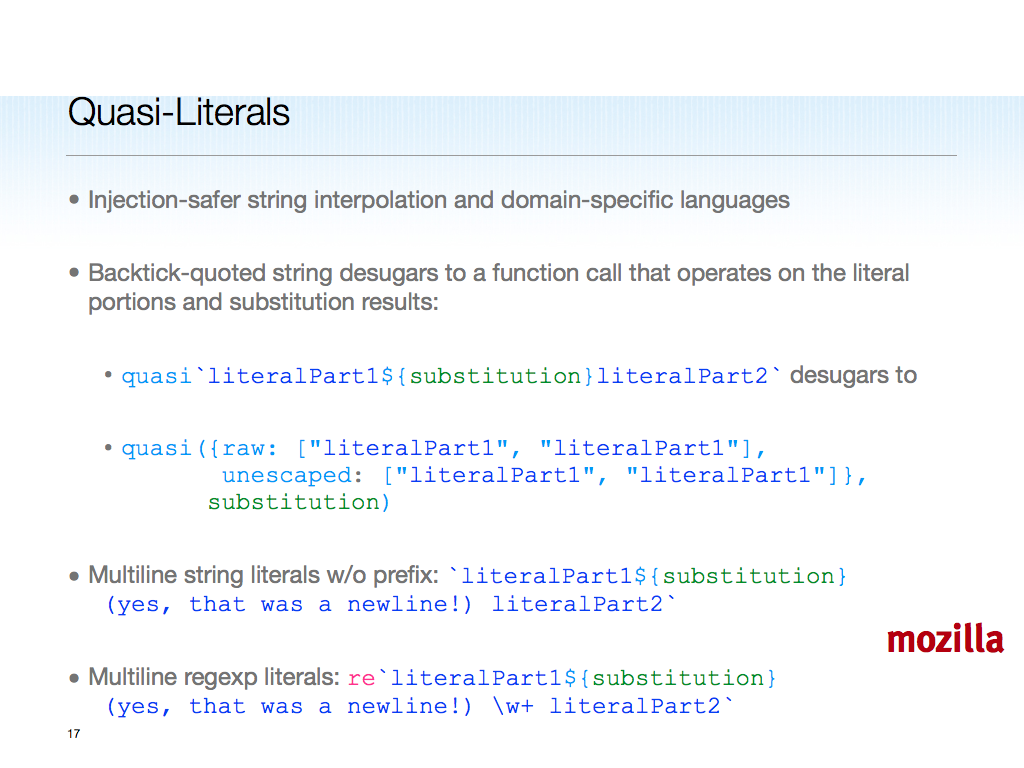

The impetus for paren-free was the poor old for-in loop. I propose we break it utterly by requiring an unparenthesized head sporting implicit let binding, and the “always use the one true iteration protocol” semantics. Also, generators based on JS1.7, based on Python. All of this is pretty much as in Python, but built on proxies.

for (var k in o) append(o[k])

module Iter = "@std:Iteration"

import Iter.{keys,values,items,range}

for k in keys(o) { append(o[k]) }

for v in values(o) { append(v) }

for [k,v] in items(o) { append(k, v) }

for x in o { append(x) }

#sqgen(n) { for i in range(n) yield i*i }

return [i * i for i in range(n)]

return (i * i for i in range(n))

Migrating for-in loops into Harmony will require saying what you mean.

That "@std:Iteration" module resource locator is something I made up. It’s an “anti-URL” since it starts with @. The idea is to be able to name built-in modules without having to write URLs, and without colliding with any possible URL. You could imagine "@dom" too.
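To give a feel for what the iteration helpers might do, here is a sketch written with the function* and for-of spellings that engines ship today, which differ from the for-in syntax proposed above. The bodies of keys, values, items and range are my guesses at reasonable behavior (own enumerable properties only), not a specification.

```javascript
// Hypothetical stand-ins for the helpers imported from "@std:Iteration",
// written as generator functions.
function* keys(o) {
  for (var k of Object.keys(o)) yield k
}
function* values(o) {
  for (var k of Object.keys(o)) yield o[k]
}
function* items(o) {
  for (var k of Object.keys(o)) yield [k, o[k]]
}
function* range(n) {
  for (var i = 0; i < n; i++) yield i
}
// Generator of squares, mirroring sqgen above.
function* sqgen(n) {
  for (var i of range(n)) yield i * i
}

var o = {a: 1, b: 2}
var seen = []
for (var [k, v] of items(o)) seen.push(k + "=" + v)
console.log(seen)                 // [ 'a=1', 'b=2' ]
console.log(Array.from(sqgen(4))) // [ 0, 1, 4, 9 ]
```

Choosing between keys, values and items at the loop head is the "saying what you mean" part: the loop no longer silently picks property names and walks the prototype chain.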

rest parameters

Instead of the bad-smelling arguments object, Harmony boasts parameter default values (not shown here) and rest parameters.

function printf(format) {

var args = Array.prototype.slice

.call(arguments,1)

/* use args as a real array here */

}

function printf(format, ...args) {

/* use args as a real array here */

}

With hash-funcs, default parameter values and rest parameters are all you get — no more arguments.

Should tuples become harmonious, the question arises: how does a rest parameter reflect, as an array or as a tuple? The answer may depend on how important it is to splice, reverse, or sort a rest parameter. VM implementors would love the frozen tuple answer. Most JS hackers who cared would, I suspect, favor array over tuple here.
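Here is a small sketch of the array answer, assuming an engine with rest parameters. The names sprintf and reversed are hypothetical; sprintf returns the formatted string rather than printing it, and handles only %s.

```javascript
// Hypothetical minimal formatter: replaces each %s with the next argument.
function sprintf(format, ...args) {
  var next = 0
  return format.replace(/%s/g, function () {
    return String(args[next++])
  })
}

// If the rest parameter is a real array, mutating methods just work.
function reversed(...rest) {
  return rest.reverse()
}

console.log(sprintf("%s + %s = %s", 1, 2, 3)) // 1 + 2 = 3
console.log(Array.isArray(reversed(1, 2)))    // true
```

With a frozen tuple, reversed would have to copy before reversing, which is the trade-off in question.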

spread

A companion to rest parameters, the spread syntax allows one to expand an array’s elements as positional parameters or array initialiser elements. Finally you can write a generic constructor-invoking helper without using switch and eval:

function construct(f, a) {

switch (a.length) {

case 0: return new f

case 1: return new f(a[0])

case 2: return new f(a[0], a[1])

default:

var s = "new f("

for (var i = 0; i < a.length; i++)

s += "a[" + i + "],"

s = s.slice(0, -1) + ")"

return eval(s)

}

}

function construct(f, a) {

return new f(...a)

}

Even without the 0, 1, and 2 special cases, this significant savings in lines screams "semantic gap being filled!"

UPDATE: @markm emailed to remind me that ES5 fills the gap part-way, at the price of a bound function:

function construct(f, a) {

var ctor = Function.prototype.bind

.apply(f, [null].concat(a))

return new ctor();

}

function construct(f, a) {

return new f(...a)

}

ES5 helps, but I think it is time to prototype Harmony's spread for Firefox.next.
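The two versions can be checked against each other in any engine that has both bind and spread. Point is a hypothetical constructor used only to exercise the helpers, and I have renamed the two construct functions so they can coexist.

```javascript
// ES5 route: bind pre-supplies the arguments; new ignores the bound this.
function constructES5(f, a) {
  var ctor = Function.prototype.bind.apply(f, [null].concat(a))
  return new ctor()
}

// Spread route, as proposed above.
function constructSpread(f, a) {
  return new f(...a)
}

// Hypothetical constructor for illustration.
function Point(x, y) {
  this.x = x
  this.y = y
}

var p1 = constructES5(Point, [1, 2])
var p2 = constructSpread(Point, [1, 2])
console.log(p1 instanceof Point, p1.x, p1.y) // true 1 2
console.log(p2 instanceof Point, p2.x, p2.y) // true 1 2
```

Both build the same object; the ES5 route pays for an extra array concatenation and a bound function on every call.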

destructuring

Often in JS you'll find yourself unpacking the properties of an object into same-named variables. Destructuring binding and assignment (prototyped since 2006 in JS1.7 in Firefox) fill this gap:

var first = sequence[0],

second = sequence[1]

var name = person.name,

address = person.address

// no easy misc solution

let [first, second] = sequence

const {name, address, ...misc} = person

The destructuring patterns mimic object and array initialisers, and raise the possibility of refutable matching in JS.
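Here is a runnable sketch of both patterns, assuming an engine that supports array and object destructuring including the ...misc rest form. The unpack function and the sample data are hypothetical.

```javascript
// Hypothetical helper that unpacks a sequence and a person object.
function unpack(sequence, person) {
  let [first, second] = sequence
  // misc collects every own enumerable property not named explicitly.
  const {name, address, ...misc} = person
  return {first: first, second: second, name: name, address: address, misc: misc}
}

var result = unpack(
  ["a", "b", "c"],
  {name: "Ada", address: "London", phone: "555"}
)
console.log(result.first, result.second) // a b
console.log(result.name, result.address) // Ada London
console.log(result.misc)                 // { phone: '555' }
```

The misc line is the one with no easy equivalent in today's JS: you would need a loop over the keys with an exclusion list.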

library missing links

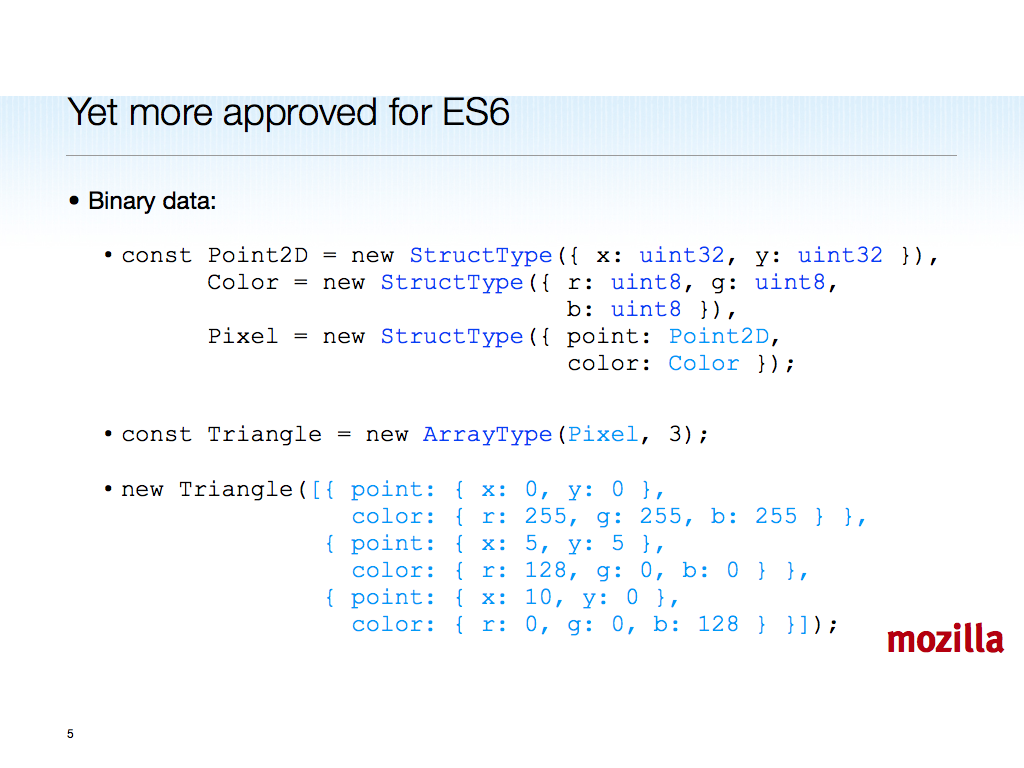

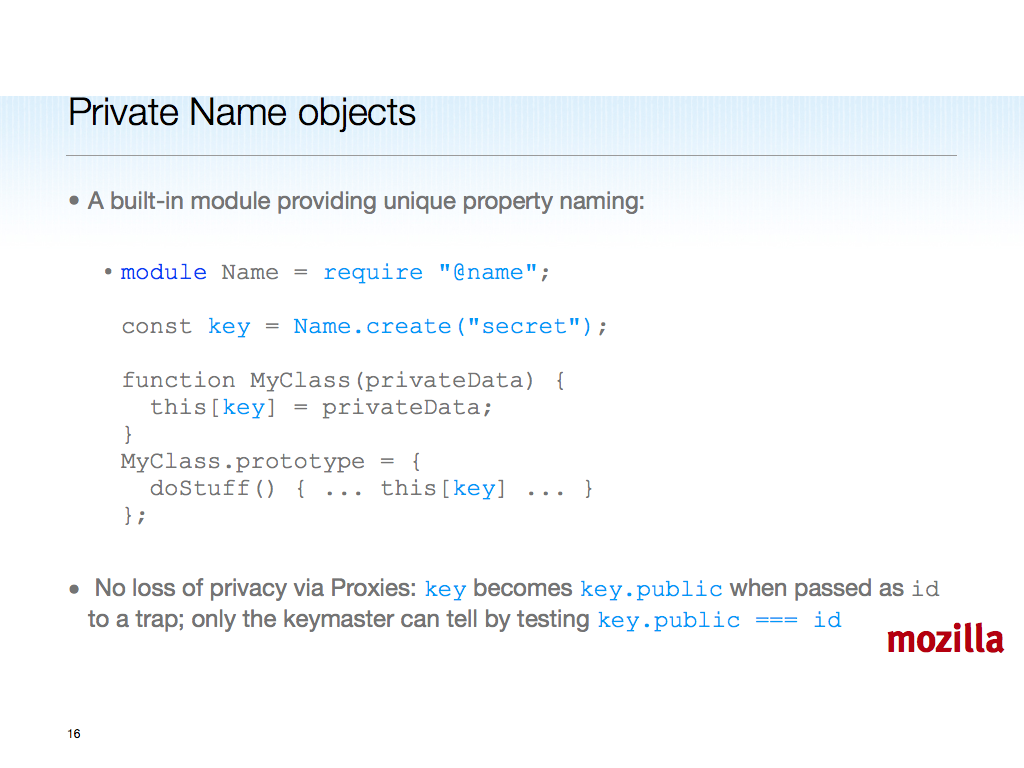

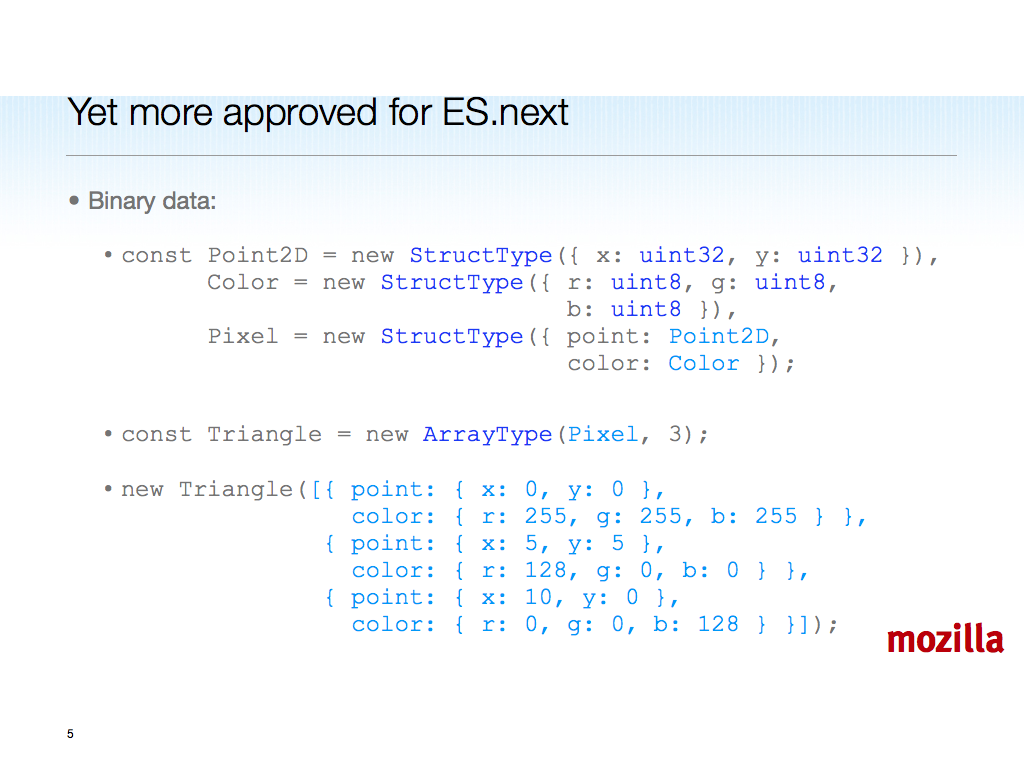

Array.create, Function.create (like Function but with a leading name parameter), binary data, proxies, and weak maps.

Array.create(proto, [1, 2, 3]) // see also Array.createConstructor

Function.create(name, ...params, body);

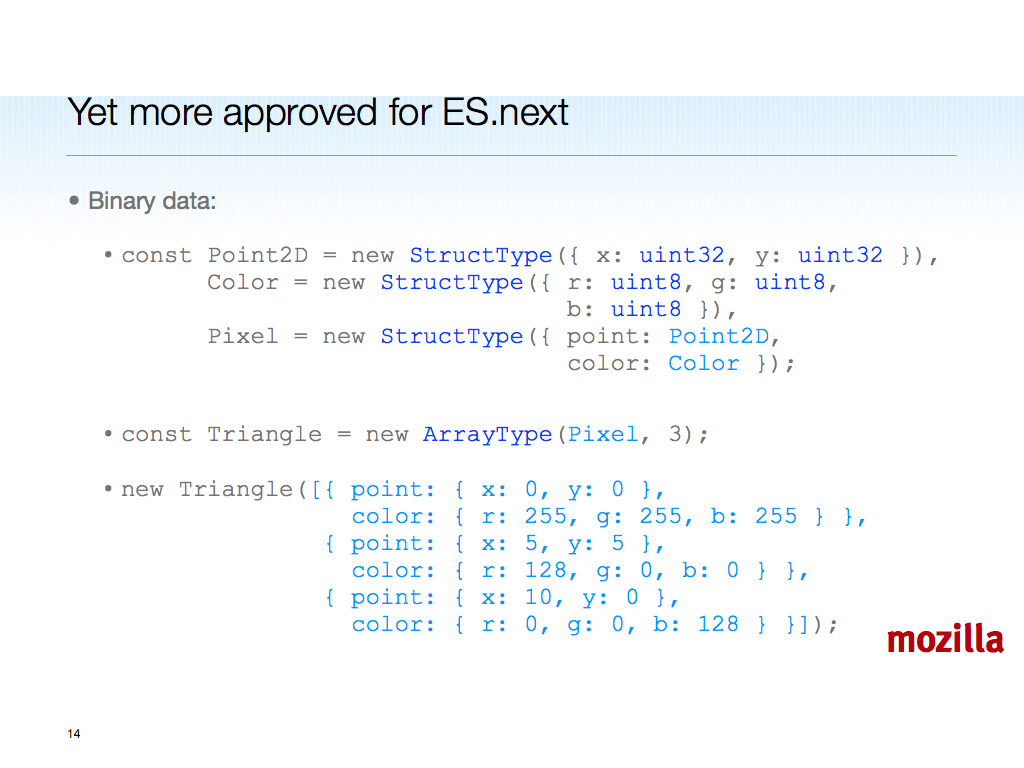

const Point2D = new StructType({ x: uint32, y: uint32 });

const Color = new StructType({ r: uint8, g: uint8, b: uint8 });

const Pixel = new StructType({ point: Point2D, color: Color });

const Triangle = new ArrayType(Pixel, 3);

Proxy.create(handler, proto)

Proxy.createFunction(handler, call, construct)

const map = WeakMap()

map.set(obj, value)

These are just the big dogs in the Harmony standard library kennel, but worth some attention.

In particular, proxies really want weak maps. Weak maps are something JS has needed for ages in general. Have you ever kept objects in an array and searched by object identity, or else mutated objects to assign hashcodes to them? No more.
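A minimal sketch of the weak map use case, assuming an engine that ships WeakMap. Shipping engines require new WeakMap(), unlike the bare call shown above. The tag and labelOf helpers are hypothetical.

```javascript
// Side table keyed by object identity; entries do not keep keys alive.
const metadata = new WeakMap()

// Hypothetical helpers: attach and look up a label without touching obj.
function tag(obj, label) {
  metadata.set(obj, label)
  return obj
}

function labelOf(obj) {
  return metadata.get(obj) // undefined if obj was never tagged
}

const a = tag({}, "first")
const b = {}
console.log(labelOf(a))            // first
console.log(labelOf(b))            // undefined
console.log(Object.keys(a).length) // 0
```

No array scan by identity and no hashcode property stuffed onto the object: the association lives entirely in the map.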

Binary data looks insanely useful, and we hope it will supplant WebGL typed arrays in due course.

closing

This is a long post. If you made it this far and take away anything, I hope it is Guy's "Growing a Language" meta-point. JS will be around for a very long time, and it has a chance to evolve until its users can replace TC39 as stewards of its growth. The promised land would be macros, for syntactic abstraction and extensibility. I am not holding my breath, but even without macros, the Harmony-of-my-dreams sketched here would be enough for me.

We aim to do more than dream. Narcissus is coming along nicely since it moved to github and got a shot in the arm from our excellent Mozilla Research interns last summer. We are prototyping Harmony in Narcissus (invoked via njs -H), so you can run it as an alternate <script> engine via the Zaphod Firefox add-on.

@andreasgal has a JS code generator for Narcissus in the works, which promises huge speedups compared to the old metacircular interpreter I wrote for fun in 2004. With good performance, we can actually do some usability studies of Harmony proposals, and avoid Harmony-of-our-nightmares: untested, hard-to-use committee designs.

A code-generating Narcissus has other advantages than performance. Migrating code into Harmony, what with the removal of the global object as top scope (never mind the other changes I'm proposing -- here's another one: let's fix typeof), needs automated checking and even code rewriting. DoctorJS uses a static analysis built on top of Narcissus, which could be used to find flaws, not just migration changes. Self-hosted parsing, JS-to-JS code generation, and powerful static analysis come together to make a pretty handy Harmonizer tool. So we're going to build that, too.

More on Narcissus and Zaphod as they develop. When the time is right, we will need users -- lots of them. As always, your comments are welcome.

/be
